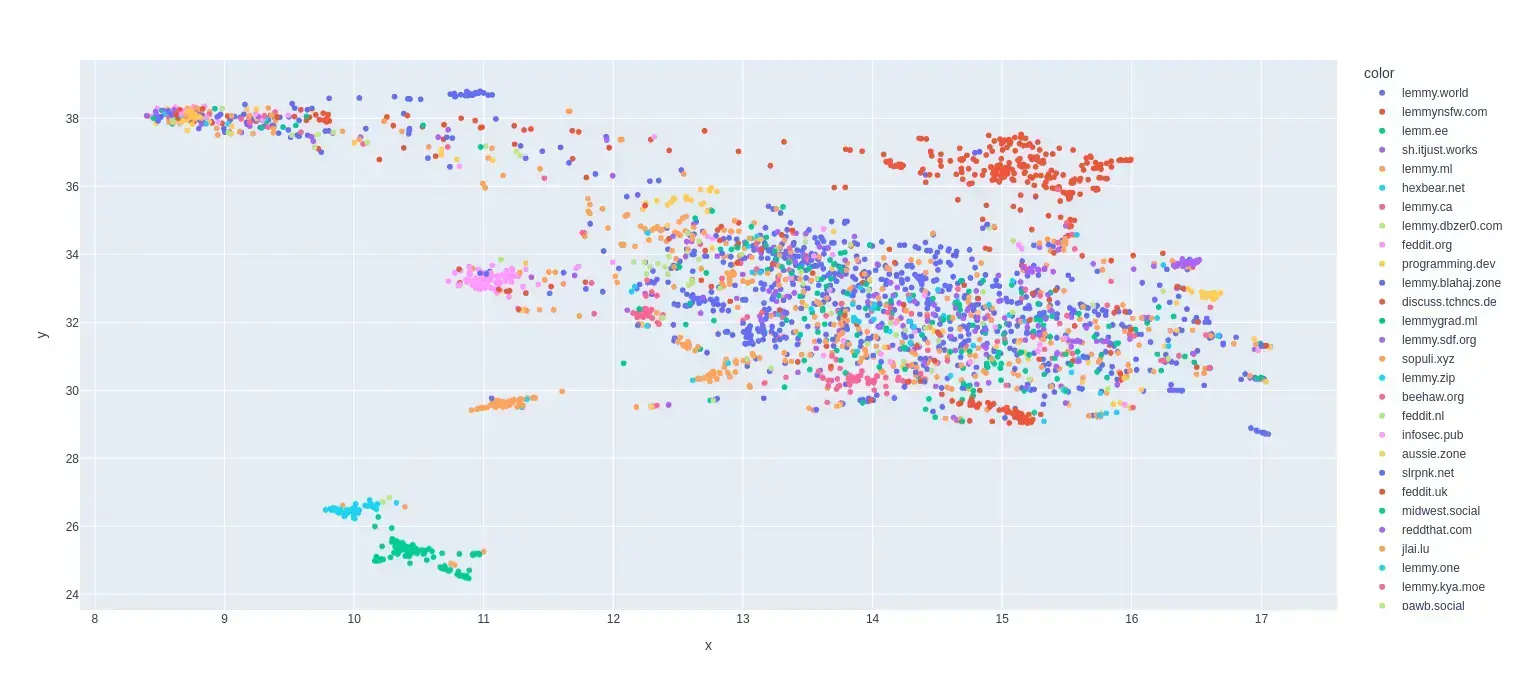

The point is to pick out the users that only like to pick fights or start trouble, and don’t have a lot that they do other than that, which is a significant number. You can see some of them in these comments.

OK, that explains why you chose these specific mechanics for how it works. Does that mean hostile but popular comments in the wrong communities would get a pass, though?

For example, let’s assume that most people on Lemmy love cars (probably not the case, but let’s go with it) and there are a few commenters who consistently show up in the [email protected] or [email protected] community to explain why everyone in that community is wrong. Or vice versa.

Since most people scroll All, it could be the case that those comments get elevated while comments from the people the community is supposed to be for get downvoted.

I mean, it’s not that big of a deal now because most values are shared across Lemmy, but I can already see that starting to shift a bit.

I was reminded of this meme a bit

Initially, I was looking at the bot as its own entity with its own opinions, but I realized that it’s not doing anything more than detecting the will of the community with as good a fidelity as I can achieve.

Yeah, that’s the main benefit I see coming from this bot, especially if its output is just given in the form of suggestions, humans are still making most of the judgement calls, and the way it makes decisions is transparent (like the appeal community you suggested).

I still think that, instead of the bot treating all of Lemmy as one community, it would be better if moderators could give it a focus, because there are differences in values between instances and communities that I think should be reflected in the moderation decisions that are taken.

However, if you aren’t planning on developing that side of it further, I think you could still let other moderators who want to test the bot see a notification any time it suggests a community user ban (edit: for clarification) as a test run. Good luck.

Yeah, it is totally valid. I actually just came across someone talking about something similar to this.

https://youtu.be/S1ypWcqnojM

Edit: The main idea was that we as humans tend to get caught in what are called progress traps: as we advance technology, we use that advance to overexploit our environment, which leads to more problems down the line.

Anti Commercial-AI license (CC BY-NC-SA 4.0)